Most people start with Python because it reads like English, but there's a massive gap between writing code that works and writing code that is actually "Pythonic." You've probably spent hours writing a nested loop to filter a list, only to find out a seasoned developer could do the same thing in one line. That's not about showing off; it's about reducing the mental load required to maintain a project. When you use the right shortcuts, you stop fighting the language and start letting it do the heavy lifting for you.

To get started, it helps to define what we mean by Python tricks: idiomatic coding patterns and built-in language features that make code more readable and, in many cases, faster. Whether you are working on data science or web backends, these patterns turn clunky logic into elegant solutions.

Quick Wins for Daily Coding

- Unpacking: Swap two variables with a, b = b, a instead of using a temporary third variable.
- F-Strings: Use f"{variable}" for readable string formatting; it's generally faster than % or .format().
- Enumeration: Use enumerate() when you need both the index and the value of a list item, avoiding the ugly range(len(list)) pattern.
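Here is a quick sketch of all three shortcuts side by side. The variable names and values are made up purely for illustration.

```python
# Unpacking: swap two values without a temporary variable
a, b = 1, 2
a, b = b, a  # the right side builds the tuple (2, 1), which is then unpacked

# F-strings: embed expressions (and format specs) directly in the string
name, score = "Ada", 93.456
summary = f"{name} scored {score:.1f}"  # ".1f" rounds to one decimal place

# enumerate(): index and value together, no range(len(...)) needed
fruits = ["apple", "banana", "cherry"]
labels = []
for i, fruit in enumerate(fruits, start=1):  # start=1 gives 1-based numbering
    labels.append(f"{i}: {fruit}")

print(a, b)     # 2 1
print(summary)  # Ada scored 93.5
print(labels)   # ['1: apple', '2: banana', '3: cherry']
```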

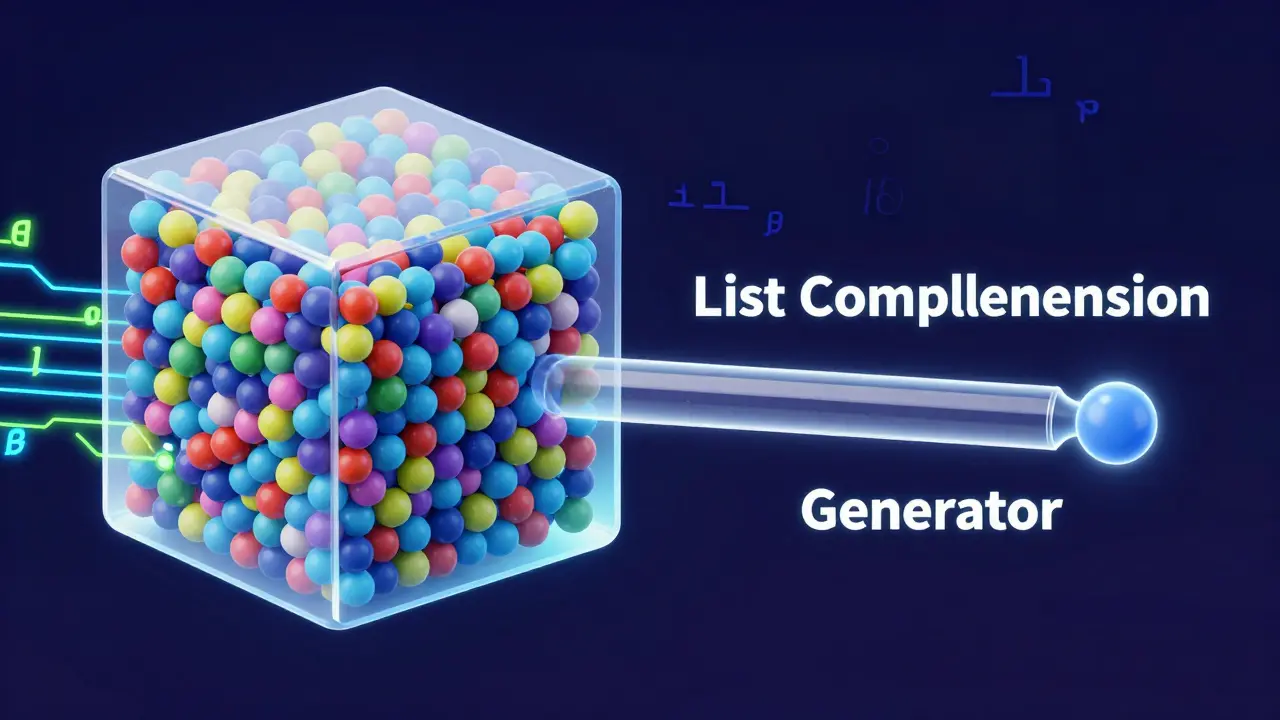

Mastering List Comprehensions and Generators

If you're still using for loops to create new lists, you're doing more work than necessary.

List Comprehensions are a concise way to create lists based on existing iterables. For example, if you need to square all even numbers in a range, you don't need five lines of code. A single line like [x**2 for x in range(10) if x % 2 == 0] handles the filtering and the transformation simultaneously.
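To make the comparison concrete, the sketch below builds the same list twice: once with an explicit loop and once with the one-line comprehension from the paragraph above.

```python
# The loop version: build the list step by step
squares_loop = []
for x in range(10):
    if x % 2 == 0:              # keep only even numbers
        squares_loop.append(x**2)

# The comprehension version: filter and transform in one expression
squares = [x**2 for x in range(10) if x % 2 == 0]

print(squares)  # [0, 4, 16, 36, 64]
```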

But here is where most developers trip up: memory usage. If you're processing a dataset with 10 million rows, a list comprehension builds every result in RAM at once, which can exhaust available memory and crash your script. This is where Generator Expressions come in. They look almost identical (you replace the square brackets [] with parentheses ()), but they use lazy evaluation. This means they produce one item at a time, keeping memory usage small and roughly constant regardless of the dataset size.
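A minimal sketch of the memory difference, using sys.getsizeof to compare the container objects themselves. The size n = 100_000 is an arbitrary choice; exact byte counts vary by Python version and platform.

```python
import sys

n = 100_000

# List comprehension: every square is computed and stored up front
squares_list = [x * x for x in range(n)]

# Generator expression: same syntax with parentheses, values produced on demand
squares_gen = (x * x for x in range(n))

# The list object grows with n; the generator only holds its iteration state
print(sys.getsizeof(squares_list))
print(sys.getsizeof(squares_gen))

# A generator can be consumed exactly once, e.g. by sum()
total = sum(squares_gen)
print(total == sum(squares_list))  # True
```

Because a generator is exhausted after one pass, calling sum(squares_gen) a second time would return 0. That is the trade-off captured in the "one-time iteration" row of the table below.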

| Feature | List Comprehension | Generator Expression |
|---|---|---|
| Syntax | [x for x in data] | (x for x in data) |
| Memory Usage | High (stores entire list) | Low (calculates on the fly) |
| Speed (Small Data) | Faster access | Slightly slower per item |
| Best Use Case | Small lists, repeated access | Large datasets, one-time iteration |

The Power of Decorators and Closures

Imagine you have ten different functions and you want to log how long each one takes to run. You could manually add timing code to every single function, but that's a maintenance nightmare. Instead, you use Decorators, which are functions that modify the behavior of another function without changing its source code. By wrapping a function in a decorator, you can inject logic before and after the main execution.
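A minimal sketch of the timing decorator described above. The names timed and slow_sum are hypothetical. The sketch uses time.perf_counter as the clock and functools.wraps so the wrapped function keeps its original name and docstring.

```python
import functools
import time

def timed(func):
    """Decorator that reports how long each call to func takes."""
    @functools.wraps(func)  # copy func's name/docstring onto the wrapper
    def wrapper(*args, **kwargs):
        start = time.perf_counter()            # logic injected before the call
        result = func(*args, **kwargs)
        elapsed = time.perf_counter() - start  # logic injected after the call
        print(f"{func.__name__} took {elapsed:.6f}s")
        return result
    return wrapper

@timed
def slow_sum(n):
    return sum(range(n))

slow_sum(1_000_000)  # prints the elapsed time; the exact value varies per machine
```

Adding timing to the other nine functions is now a one-line @timed per function, and removing it later is just as easy.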

This relies on a concept called Closures. A closure is a function that remembers the environment in which it was created, allowing it to access variables from an outer scope even after that outer function has finished executing. Closures are what let a decorator's wrapper keep a reference to the original function. They are also a lightweight alternative to writing a whole class when you just need to create specialized functions on the fly.
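To see a closure on its own, here is a small function factory. The name make_multiplier is hypothetical. The inner function keeps using factor long after make_multiplier has returned.

```python
def make_multiplier(factor):
    # factor is a local variable of make_multiplier...
    def multiply(x):
        # ...but multiply can still read it after make_multiplier returns
        return x * factor
    return multiply

double = make_multiplier(2)
triple = make_multiplier(3)

print(double(10), triple(10))  # 20 30

# The captured value is stored in a "cell" attached to the inner function
print(double.__closure__[0].cell_contents)  # 2
```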

Unlocking Magic with Dunder Methods

Ever wonder why you can use the + operator to join two strings or the len() function to count items in a dictionary? That happens because of Dunder Methods (Double Underscore methods). These are special methods like __init__, __str__, and __getitem__ that let you define how your custom objects behave with Python's built-in operators and functions.

For instance, if you're building a custom Vector class for a physics engine, you don't want to call vector1.add(vector2). It's much more intuitive to write vector1 + vector2. By implementing the __add__ method, you tell Python exactly how to handle the plus sign when it encounters your class. This turns your custom objects into first-class citizens of the language, making your API feel natural to anyone else using your code.
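A minimal 2D Vector along those lines might look like the sketch below. It is an illustrative class, not taken from any physics library. Besides __add__, it defines __eq__ and __repr__ so results can be compared and printed.

```python
class Vector:
    """A minimal 2D vector, for illustration only."""

    def __init__(self, x, y):
        self.x = x
        self.y = y

    def __add__(self, other):
        # Called for `self + other`
        if not isinstance(other, Vector):
            return NotImplemented  # lets Python raise a proper TypeError
        return Vector(self.x + other.x, self.y + other.y)

    def __eq__(self, other):
        # Called for `self == other`; compares components, not identity
        if not isinstance(other, Vector):
            return NotImplemented
        return self.x == other.x and self.y == other.y

    def __repr__(self):
        return f"Vector({self.x}, {self.y})"

v = Vector(1, 2) + Vector(3, 4)
print(v)  # Vector(4, 6)
```

Returning NotImplemented instead of raising an error directly gives the other operand a chance to handle the operation. This is the standard protocol for binary operators.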

Efficient Data Handling with Collections

Beyond basic lists and dictionaries, the collections module provides specialized container types that solve common headaches. One of the most useful is defaultdict. Normally, if you try to access a key that doesn't exist in a dictionary, Python raises a KeyError. With defaultdict, you supply a default factory (such as list or int), and Python automatically creates the entry with that default when a key is missing. This removes the need for those repetitive if key not in dict: dict[key] = [] checks.
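The sketch below groups words by their first letter twice: once with a plain dict and its existence check, and once with defaultdict(list). It also shows defaultdict(int) used as a counter. The word list is made up for illustration.

```python
from collections import defaultdict

words = ["apple", "avocado", "banana", "blueberry", "cherry"]

# Plain dict: every new key needs an explicit existence check
groups_plain = {}
for word in words:
    key = word[0]
    if key not in groups_plain:
        groups_plain[key] = []
    groups_plain[key].append(word)

# defaultdict: list() is called automatically for any missing key
groups = defaultdict(list)
for word in words:
    groups[word[0]].append(word)

# int() returns 0, which makes defaultdict(int) a handy counter
counts = defaultdict(int)
for word in words:
    counts[word[0]] += 1

print(dict(groups))  # {'a': ['apple', 'avocado'], 'b': ['banana', 'blueberry'], 'c': ['cherry']}
print(dict(counts))  # {'a': 2, 'b': 2, 'c': 1}
```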

Then there is namedtuple. It gives you the memory efficiency of a tuple but lets you access elements by name instead of index. Instead of remembering that user[2] is the email address, you can just write user.email. This helps prevent index mix-up bugs in large projects where data structures are passed through multiple layers of logic.
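A short sketch of that user record as a namedtuple. The User type and its fields are hypothetical examples.

```python
from collections import namedtuple

# Define a lightweight, immutable record type with named fields
User = namedtuple("User", ["id", "name", "email"])

user = User(id=1, name="Ada", email="ada@example.com")

print(user.email)  # ada@example.com (the same value as user[2])
print(user)        # User(id=1, name='Ada', email='ada@example.com')

# It is still a regular tuple, so unpacking and indexing keep working
user_id, name, email = user
```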

Common Pitfalls and How to Avoid Them

Not every trick is a good trick. A common mistake is the "mutable default argument." If you define a function like def add_item(item, items=[]), that list is created only once, when the function is defined, not every time it is called. Every call that relies on the default shares that same list, so it keeps growing across calls and leads to unpredictable bugs. The fix? Use items=None and create a new list inside the function.
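Here is the bug and the fix side by side. The parameter is named items rather than list so it doesn't shadow the built-in list type. The function names are illustrative.

```python
def add_item_buggy(item, items=[]):
    # The default list is created once, at definition time, and then reused
    items.append(item)
    return items

def add_item(item, items=None):
    # A fresh list is created on every call that doesn't pass one in
    if items is None:
        items = []
    items.append(item)
    return items

print(add_item_buggy("a"))  # ['a']
print(add_item_buggy("b"))  # ['a', 'b']  <- state leaked from the first call
print(add_item("a"))        # ['a']
print(add_item("b"))        # ['b']
```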

Another trap is over-using one-liners. While a complex list comprehension looks clever, if it takes a teammate ten minutes to decode a single line of code, you've failed the readability test. The rule of thumb is: if a comprehension spans more than two lines or contains more than two levels of nesting, break it back out into a standard loop.

Are list comprehensions always faster than for loops?

Generally, yes. In CPython, a list comprehension appends each item with a dedicated bytecode instruction. This skips the method lookup and call that result.append() costs on every iteration of a manual loop. However, the performance gain is negligible for very small lists, and for massive datasets, a generator is better to avoid memory exhaustion.
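If you want to check this on your own machine, timeit gives a quick comparison. The list size and repetition count below are arbitrary choices, and no timings are shown because they depend on your hardware and Python version.

```python
import timeit

def with_loop(n=1_000):
    result = []
    for x in range(n):
        result.append(x * 2)  # attribute lookup + method call on every iteration
    return result

def with_comprehension(n=1_000):
    return [x * 2 for x in range(n)]

# Both approaches build exactly the same list...
assert with_loop() == with_comprehension()

# ...but they take different amounts of time to do it
loop_time = timeit.timeit(with_loop, number=2_000)
comp_time = timeit.timeit(with_comprehension, number=2_000)
print(f"loop: {loop_time:.3f}s  comprehension: {comp_time:.3f}s")
```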

When should I use a decorator instead of a regular function call?

Use a decorator when you need to apply the same logic to multiple functions across your codebase-such as authentication, logging, or caching. If the logic is specific to only one function, a regular call is cleaner. Decorators keep your business logic separate from your administrative logic.

What is the difference between __str__ and __repr__?

__str__ is meant to be a user-friendly string representation of an object, while __repr__ is meant for developers. Ideally, __repr__ should be unambiguous and look like the code used to create the object, making it invaluable for debugging in the console.
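A small illustrative Point class shows the difference. str() and f-strings use __str__. repr(), the interactive console, and the contents of containers use __repr__.

```python
class Point:
    def __init__(self, x, y):
        self.x = x
        self.y = y

    def __repr__(self):
        # For developers: unambiguous, mirrors the constructor call
        return f"Point(x={self.x!r}, y={self.y!r})"

    def __str__(self):
        # For end users: short and readable
        return f"({self.x}, {self.y})"

p = Point(3, 4)
print(str(p))   # (3, 4)
print(repr(p))  # Point(x=3, y=4)
print([p])      # [Point(x=3, y=4)]  (containers show their items' repr)
```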

Do f-strings work in older versions of Python?

F-strings were introduced in Python 3.6. If you are working on a legacy system using Python 2.7 or 3.5, you will need to use the .format() method or the older % operator. However, for any project started after 2018, f-strings are the industry standard for speed and clarity.

Can I use multiple decorators on one function?

Yes, you can stack decorators. They are applied from the bottom up. For example, if you have @decorator_one over @decorator_two, Python first wraps the function with decorator_two and then wraps that resulting function with decorator_one.
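This sketch reuses the decorator_one and decorator_two names from the answer above. Each decorator is a toy that wraps the result in a label, so the nesting order is visible in the output. The greet function is hypothetical.

```python
def decorator_one(func):
    def wrapper():
        return "one(" + func() + ")"
    return wrapper

def decorator_two(func):
    def wrapper():
        return "two(" + func() + ")"
    return wrapper

@decorator_one
@decorator_two
def greet():
    return "hello"

# Equivalent to: greet = decorator_one(decorator_two(greet))
print(greet())  # one(two(hello))
```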

Next Steps for Optimization

Once you've mastered these patterns, your next move should be exploring the itertools and functools modules. These libraries provide high-performance tools for iterating over complex data and managing function caching (like lru_cache). If you're doing heavy numerical work, moving from standard Python lists to NumPy, which stores numbers in contiguous, typed arrays and runs vectorized operations in compiled code, will give you speed boosts that no amount of "trick" coding can provide.
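As a first taste of functools, here is lru_cache applied to the classic recursive Fibonacci function (a standard illustrative example, not a recommended way to compute Fibonacci numbers). Without the cache, the naive recursion recomputes the same values an exponential number of times. With the cache, each n is computed once.

```python
from functools import lru_cache

@lru_cache(maxsize=None)  # unbounded cache keyed on the call arguments
def fib(n):
    if n < 2:
        return n
    return fib(n - 1) + fib(n - 2)

print(fib(80))           # 23416728348467685
print(fib.cache_info())  # hit/miss statistics reported by the cache
```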