for loops to build simple lists or manually closing files, you're fighting the language rather than using it.

Quick Wins for Your Code

- Use list comprehensions to replace clunky loops.

- Leverage decorators to handle repetitive logic like logging.

- Switch to generators when dealing with massive datasets to save RAM.

- Apply f-strings for cleaner and faster string formatting.

- Use context managers to prevent resource leaks and files left open or half-written.

Mastering Compact Data Handling

Let's start with the most common area where Python developers leave performance on the table: creating lists. If you find yourself initializing an empty list and then using .append() inside a loop, you're doing more work than necessary.

List comprehensions are a concise way to create lists based on existing iterables. It's not just about typing fewer characters; in CPython they are usually faster too, because the interpreter builds the list with dedicated bytecode instead of looking up and calling .append() on every iteration.

Imagine you have a list of prices and you only want the ones over $100, but converted to a different currency. Instead of five lines of code, you can do it in one. This approach keeps your logic tight and reduces the chance of introducing bugs during the iteration process. But be careful: if your comprehension spans more than two lines, it's time to go back to a standard loop for the sake of your teammates' sanity.
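The price scenario above can be sketched as follows. The price list and the exchange rate are made-up values for illustration only, and the loop version is included just to show that both forms produce the same result.

```python
# Hypothetical data: prices in USD and a placeholder exchange rate (not a real quote).
prices_usd = [45.0, 120.0, 99.99, 250.0, 100.0]
USD_TO_EUR = 0.9

# Loop version: create an empty list, test each price, convert, append.
converted_loop = []
for price in prices_usd:
    if price > 100:
        converted_loop.append(round(price * USD_TO_EUR, 2))

# Comprehension version: the same filter and conversion in one expression.
converted = [round(price * USD_TO_EUR, 2) for price in prices_usd if price > 100]

print(converted)  # [108.0, 225.0]
```

Note that the filter is strict, so the price of exactly 100.0 is left out.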

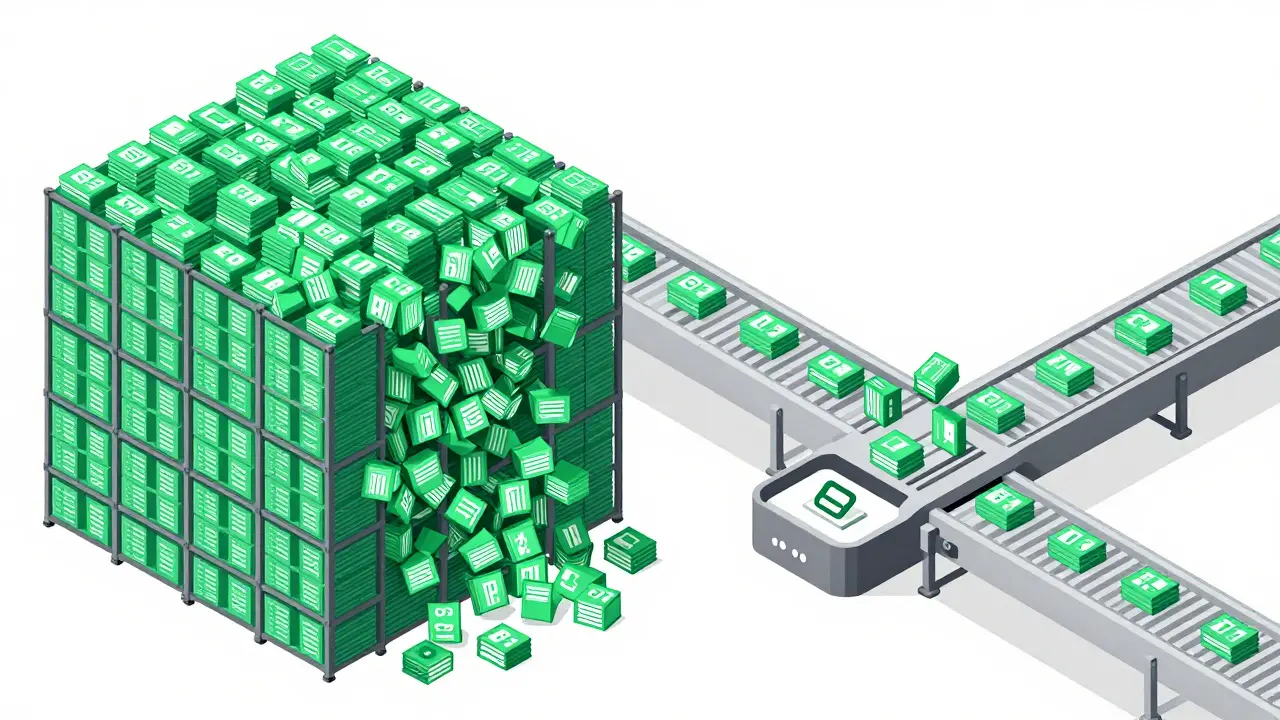

Then there are generator expressions. These look almost exactly like list comprehensions but use parentheses instead of brackets. While a list comprehension builds every item in memory immediately, a generator produces one item at a time as you iterate over it. If you're processing a 10GB log file, a list comprehension can exhaust your RAM and bring the process down, while a generator will sip memory and keep the system stable.

| Feature | List Comprehension | Generator Expression |
|---|---|---|
| Syntax | [x for x in data] | (x for x in data) |
| Memory Usage | High (Allocates all at once) | Low (Yields on demand) |
| Access | Indexable (e.g., list[0]) | One-time iteration |
| Speed | Faster for small datasets | Faster for massive streams |
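To make the memory difference in the table concrete, here is a minimal sketch. The range of numbers is a stand-in for a large data source, and sys.getsizeof only reports the size of the container object itself, so treat the comparison as indicative rather than a full memory profile.

```python
import sys

# Hypothetical stand-in for a large data source.
numbers = range(100_000)

squares_list = [n * n for n in numbers]  # every element is built up front
squares_gen = (n * n for n in numbers)   # nothing is computed until iteration

# The list object grows with the data; the generator object stays small.
print(sys.getsizeof(squares_list) > sys.getsizeof(squares_gen))  # True

# Aggregations can consume a generator directly, one item at a time.
total = sum(squares_gen)

# A generator can only be walked once: a second pass yields nothing.
leftover = list(squares_gen)
print(leftover)  # []
```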

Wrapping Logic with Decorators

Ever noticed how you often write the same code to check if a user is logged in, or to time how long a function takes to run, across ten different parts of your app? That's where Decorators come in. A decorator is essentially a function that takes another function and extends its behavior without explicitly modifying its source code. It's a powerful implementation of the wrapper pattern.

Think of a decorator like a security guard at a door. The function inside is the room. The guard checks your ID (the logic) before letting you enter the room (executing the function). This keeps your core business logic clean and separates it from "cross-cutting concerns" like authentication, caching, or logging. In a real-world scenario, using a @lru_cache decorator from the functools module can turn a slow recursive function into a lightning-fast one by storing the results of expensive calls.
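Here is a rough sketch of both ideas: a hand-written decorator and the built-in lru_cache. The timed decorator, its last_elapsed attribute, and the function names are hypothetical and exist only for this example; functools.wraps and functools.lru_cache are standard library.

```python
import functools
import time


def timed(func):
    """Hypothetical decorator: record how long the latest call to func took."""

    @functools.wraps(func)  # keep the wrapped function's name and docstring
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Runs whether func returned normally or raised.
            wrapper.last_elapsed = time.perf_counter() - start

    wrapper.last_elapsed = None
    return wrapper


@functools.lru_cache(maxsize=None)
def fib(n):
    """Naive recursive Fibonacci; the cache computes each value only once."""
    return n if n < 2 else fib(n - 1) + fib(n - 2)


@timed
def slow_report(n):
    return fib(n)


result = slow_report(30)
print(result)  # 832040
print(slow_report.last_elapsed)  # elapsed seconds, varies per run
```

The core functions stay free of timing or caching code; removing a decorator line removes the behavior without touching the function body.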

Efficiency with the Collections Module

Standard dictionaries and lists are great, but once you hit a certain level of complexity, they become clumsy. The collections module provides specialized container datatypes that solve common problems. One of the most useful is defaultdict. Normally, if you try to access a key that doesn't exist in a dictionary, Python raises a KeyError. A defaultdict lets you supply a factory for missing values (such as list, which produces an empty list) so you can append items to a key without checking if it exists first.
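A small sketch of grouping with defaultdict, using invented (category, item) pairs:

```python
from collections import defaultdict

# Hypothetical (category, item) pairs.
orders = [("fruit", "apple"), ("veg", "carrot"), ("fruit", "pear")]

grouped = defaultdict(list)  # list is the factory called for each missing key
for category, item in orders:
    grouped[category].append(item)  # no "if category not in grouped" check

print(dict(grouped))  # {'fruit': ['apple', 'pear'], 'veg': ['carrot']}
```

One thing to keep in mind: merely reading a missing key with grouped[key] also creates it, so use the in operator or .get() when you only want to check.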

Another game-changer is Counter. If you need to count occurrences of elements in a list (say, the most common words in a customer feedback survey), don't build a dictionary manually. Counter does it in one line. It's an optimized tool that handles the tallying logic internally, making your code readable and your intent clear to anyone else reading your script.
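For instance, with a made-up feedback string standing in for survey data:

```python
from collections import Counter

# Hypothetical survey text.
feedback = "great service great price slow delivery great support slow app"

word_counts = Counter(feedback.split())  # tally every word in one call
top_two = word_counts.most_common(2)     # highest counts first

print(top_two)  # [('great', 3), ('slow', 2)]
```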

Managing Resources Safely

We've all been there: you open a file, the program crashes, and the file remains locked by the system because you forgot to call .close(). This is why context managers are non-negotiable. Using the with statement ensures that resources are cleaned up regardless of whether the code finished successfully or hit an error. It handles the "teardown" phase of the resource lifecycle automatically.
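A minimal sketch of that guarantee, writing to a temporary directory so nothing on your system is touched. The file name and the simulated failure are illustrative.

```python
import os
import tempfile

# Throwaway location for the example file.
path = os.path.join(tempfile.mkdtemp(), "notes.txt")

with open(path, "w", encoding="utf-8") as handle:
    handle.write("first line\n")
print(handle.closed)  # True: closed as soon as the block ends

# The file is closed even when the block exits because of an exception.
try:
    with open(path, encoding="utf-8") as handle:
        first = handle.readline()
        raise RuntimeError("simulated failure")
except RuntimeError:
    pass
print(handle.closed)  # True
```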

This isn't just for files. You can use context managers for database connections, network sockets, or threading locks. If you're building a high-traffic API, failing to close database connections will eventually lead to a "Too many connections" error, crashing your entire service. Wrapping these in with blocks means the connection is released as soon as the block exits, provided the library's context manager is designed to close it or return it to the pool.
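As one non-file example, threading.Lock works with the with statement too. This is a sketch with a hypothetical shared counter; the function and variable names are invented for the illustration.

```python
import threading


def add_many(state, lock, times):
    """Increment a shared counter, holding the lock for each update."""
    for _ in range(times):
        with lock:  # acquired here, released when the block exits
            state["count"] += 1


state = {"count": 0}
counter_lock = threading.Lock()

threads = [
    threading.Thread(target=add_many, args=(state, counter_lock, 10_000))
    for _ in range(4)
]
for thread in threads:
    thread.start()
for thread in threads:
    thread.join()

print(state["count"])  # 40000
```

Because the lock is released on block exit even if the body raises, a failing thread cannot leave the others waiting forever.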

Slicing and Dicing with Advanced Indexing

Python's slicing syntax is one of its most underrated features. Most people know list[0:5], but fewer use the step parameter. If you want a reversed copy of a string or a list, [::-1] is a concise and fast way to get one. It doesn't just look clever; in CPython the copy is built inside the interpreter's C code rather than by a Python-level loop.
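A few slices side by side, using a throwaway list of letters:

```python
letters = list("abcdefgh")

first_five = letters[0:5]         # start and stop
every_other = letters[::2]        # step of 2
reversed_letters = letters[::-1]  # negative step walks backwards
reversed_text = "python"[::-1]    # strings slice the same way

print(every_other)    # ['a', 'c', 'e', 'g']
print(reversed_text)  # nohtyp
```

Every slice returns a new object, so letters itself is left unchanged.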

Pair this with Unpacking. Using the * operator allows you to grab the first element of a list and dump the rest into a separate variable. For example, if you have a list of search results, you can use first, *rest = results. This prevents you from having to use clumsy index slicing like results[0] and results[1:] separately, making the flow of data through your functions much smoother.
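In code, with a hypothetical list of search results:

```python
results = ["best match", "second", "third", "fourth"]

# First element in one name, everything else collected into a list.
first, *rest = results

# The starred name can also sit in the middle.
head, *middle, tail = results

print(first)   # best match
print(rest)    # ['second', 'third', 'fourth']
print(middle)  # ['second', 'third']
```

The starred target always receives a list, even when it ends up empty.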

The Magic of f-Strings

If you are still using .format() or the old % operator, you're using slower and more verbose methods. f-strings (formatted string literals) were introduced to make string interpolation intuitive and fast. They are evaluated at runtime, meaning you can put actual expressions, function calls, or math directly inside the curly braces.

Beyond simple variable insertion, f-strings allow for complex formatting. You can specify decimal precision for floats (crucial for financial apps) or align text for clean console outputs. Because they are parsed by the compiler rather than being a function call, they provide a noticeable performance boost in loops that generate thousands of strings per second.
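A short sketch of those features. The names and values are invented for the example.

```python
name = "Ada"
balance = 1234.5
items = 3

greeting = f"Hello, {name.upper()}!"       # method call inside the braces
total_line = f"Balance: {balance:,.2f}"    # thousands separator, 2 decimals
math_line = f"{items} items at 2.50 each cost {items * 2.5:.2f}"
row = f"{'Item':<10}|{'Qty':>5}"           # left- and right-aligned columns

print(greeting)    # Hello, ADA!
print(total_line)  # Balance: 1,234.50
print(math_line)   # 3 items at 2.50 each cost 7.50
print(row)         # Item      |  Qty
```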

Are list comprehensions always better than loops?

Not always. While they are faster and more concise, they can become unreadable if the logic is too complex. If you need multiple if statements or nested loops, a standard for loop is better for maintenance and debugging.

When should I use a generator instead of a list?

Use a generator when you are dealing with large datasets that don't fit in memory or when you only need to iterate through the data once. If you need to access elements by index or iterate multiple times, a list is the correct choice.

Do decorators slow down my code?

There is a tiny bit of overhead because you're adding an extra function call to the stack, but it's negligible in 99% of applications. The benefit of cleaner, modular code far outweighs the microscopic performance hit.

What is the difference between a set and a list in terms of performance?

Checking if an item exists in a set (using in) is O(1) on average, meaning it takes roughly the same amount of time regardless of the size. In a list, it's O(n), meaning it gets slower as the list grows. Use sets for membership testing.
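You can see the gap with a rough timing sketch like the one below. The collection size and repeat count are arbitrary choices, and the absolute numbers will vary by machine; only the relative difference matters.

```python
import timeit

ids_list = list(range(100_000))
ids_set = set(ids_list)
target = 99_999  # last element: the worst case for a list scan

names = {"ids_list": ids_list, "ids_set": ids_set, "target": target}

# Time 200 membership checks against each container.
list_time = timeit.timeit("target in ids_list", globals=names, number=200)
set_time = timeit.timeit("target in ids_set", globals=names, number=200)

print(f"list: {list_time:.4f}s  set: {set_time:.6f}s")
```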

Can I create my own context manager?

Yes, you can do this by creating a class with __enter__ and __exit__ methods, or more simply by using the contextlib module's @contextmanager decorator on a generator function.
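Both approaches, sketched with hypothetical names (Timer, tracked, and the events list are made up for this example):

```python
import time
from contextlib import contextmanager


class Timer:
    """Class-based context manager that measures the time spent in the block."""

    def __enter__(self):
        self.start = time.perf_counter()
        return self  # becomes the value bound by "as"

    def __exit__(self, exc_type, exc, tb):
        self.elapsed = time.perf_counter() - self.start
        return False  # do not suppress exceptions


@contextmanager
def tracked(log, label):
    """Generator-based context manager: setup before yield, teardown after."""
    log.append(f"enter {label}")
    try:
        yield log
    finally:
        log.append(f"exit {label}")  # runs even if the block raised


events = []
try:
    with tracked(events, "job"):
        raise ValueError("simulated failure")
except ValueError:
    events.append("handled")

with Timer() as timer:
    sum(range(1000))

print(events)  # ['enter job', 'exit job', 'handled']
```

The try/finally around yield is what makes the teardown run when the block fails; without it, the exit step would be skipped on an exception.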

Next Steps for Your Growth

If you've mastered these tricks, the next step is to explore the Standard Library more deeply. Look into the itertools module for advanced iteration and pathlib for modern file system paths. If you're working with data science, start moving from standard lists to NumPy arrays, which offer vectorized operations that are often orders of magnitude faster than an equivalent pure-Python loop.

Try auditing your current projects. Find one loop and turn it into a comprehension. Find one repetitive check and turn it into a decorator. The goal isn't just to write shorter code, but to write code that leverages the core strengths of the language to be more robust and efficient.